Atmospheric Mercury Has Declined — But Why?

The amount of gaseous mercury in the atmosphere has dropped sharply from its peak in the 1980s and has remained relatively constant since the mid-1990s. This welcome decline may result from control measures undertaken in western Europe and North America, but scientists who have just concluded a study of atmospheric mercury say they cannot reconcile the amounts actually found with current understanding of natural and manmade sources of the element.

An international group of scientists, led by Franz Slemr of the Max Planck Institute for Chemistry [Max-Planck-Institut fuer Chemie] in Mainz, Germany, studied the worldwide trend of total gaseous mercury at six sites in the northern hemisphere, two sites in the southern hemisphere, and on eight transatlantic ship cruises since 1977. They have published their findings in Geophysical Research Letters, a journal of the American Geophysical Union.

The fixed sites ranged from the Canadian Arctic to Antarctica. In both hemispheres, total gaseous mercury increased in the late 1970s, apparently peaked in the late 1980s, decreased to a minimum in the mid-1990s, and has remained relatively constant since then. Concentrations in the southern hemisphere are about one-third less than in the northern hemisphere. These observations accord well, the researchers say, with data on mercury deposited in peat bogs and found in ice cores.

Scientists have believed that natural processes and human activities put about equal amounts of mercury into the atmosphere. Assuming that natural emissions and re-emissions of the historically deposited mercury have remained constant, the observed reduction of about 17 percent in concentration from 1990 to 1996 would have to result from a reduction of about 34 percent in manmade emissions during that period. This, the scientists say, is three to four times larger than the 10 percent decrease in manmade emissions suggested by previous studies. Therefore, either our understanding of manmade emissions or of the ratio of natural to manmade emissions probably has to be refined, they say.
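The arithmetic behind that comparison can be sketched in a few lines of Python. This is only an illustration, not the researchers' method: it assumes that atmospheric concentration scales linearly with total emissions and that the natural half stays constant, as the paragraph above states. The function name `required_manmade_reduction` and its parameters are hypothetical.

```python
def required_manmade_reduction(concentration_drop, manmade_share):
    """Fractional cut in manmade emissions needed to explain a given
    fractional drop in concentration, if natural emissions are constant.

    Assumption (a simplification): concentration is proportional to
    total emissions, so only the manmade share can account for the drop.
    """
    if not 0 < manmade_share <= 1:
        raise ValueError("manmade_share must be in (0, 1]")
    return concentration_drop / manmade_share


# Figures taken from the article: 17 percent observed drop, roughly equal
# natural and manmade shares, 10 percent cut suggested by earlier studies.
observed_drop = 0.17
manmade_share = 0.5
inventory_cut = 0.10

required = required_manmade_reduction(observed_drop, manmade_share)
ratio = required / inventory_cut

print(f"Required manmade reduction: {required:.0%}")
print(f"Ratio to inventory estimate: {ratio:.1f}")
```

With the article's figures, the required cut comes to about 34 percent, which is between three and four times the 10 percent suggested by earlier studies. If the manmade share were larger than one half, the required cut would shrink accordingly, which is why the researchers point to the natural-to-manmade ratio as one quantity that may need refining.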

The level of atmospheric mercury is important even though, at current levels, it is not directly toxic. The problem, says Slemr, “is that some 5,000 metric tons of atmospheric mercury are currently deposited worldwide every year. The atmospheric lifetime of elemental mercury is about one year and, thus, the mercury is deposited even in remote areas.”

Further, Slemr says, some of the atmospheric mercury is deposited into soil and water, where it can be “transformed to methyl mercury, one of the most toxic compounds.” In ocean water, methyl mercury concentrates in plankton and further accumulates in fish, especially those high in the food chain, such as tuna. High methyl mercury levels in tuna can lead to chronic diseases in persons who eat the fish, with pregnant women most in danger.

Therefore, the researchers say, it is essential that we better understand the amount and sources of mercury in the atmosphere. The amount of mercury emitted naturally is not well understood at present. With regard to manmade emissions, coal burning definitely emits mercury, and it was recently discovered that biomass burning is another important source. Waste incineration is also a source, but not yet well quantified. Further, says Slemr, the annual re-emission of a small fraction of the 200,000 metric tons of mercury deposited into the environment since Roman times is uncertain.

Slemr and his colleagues conclude that future emission inventories must take into account the difference between atmospheric mercury levels in the northern and southern hemispheres, as well as historic and present-day emission trends. For any emission inventory to be credible, further research will be needed into both the size and the nature of the sources of atmospheric mercury, natural and manmade.

The study was funded in part by the Deutsche Forschungsgemeinschaft.

Media Contact

All latest news from the category: Earth Sciences

Earth Sciences (also referred to as Geosciences), which deals with basic issues surrounding our planet, plays a vital role in the area of energy and raw materials supply.

Earth Sciences comprises subjects such as geology, geography, geological informatics, paleontology, mineralogy, petrography, crystallography, geophysics, geodesy, glaciology, cartography, photogrammetry, meteorology and seismology, early-warning systems, earthquake research and polar research.

Newest articles

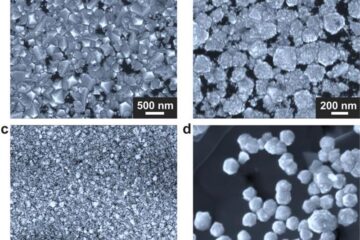

Making diamonds at ambient pressure

Scientists develop novel liquid metal alloy system to synthesize diamond under moderate conditions. Did you know that 99% of synthetic diamonds are currently produced using high-pressure and high-temperature (HPHT) methods?[2]…

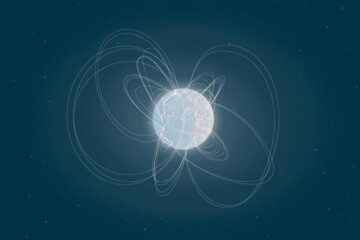

Eruption of mega-magnetic star lights up nearby galaxy

Thanks to ESA satellites, an international team including UNIGE researchers has detected a giant eruption coming from a magnetar, an extremely magnetic neutron star. While ESA’s satellite INTEGRAL was observing…

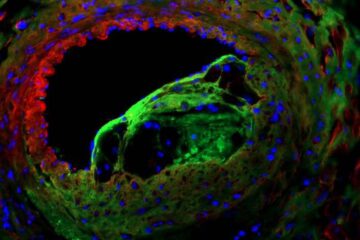

Solving the riddle of the sphingolipids in coronary artery disease

Weill Cornell Medicine investigators have uncovered a way to unleash in blood vessels the protective effects of a type of fat-related molecule known as a sphingolipid, suggesting a promising new…