Climate sensitivity to CO2 more limited than extreme projections

Authors of the study, which was funded by the National Science Foundation and published online this week in the journal Science, say that global warming is real and that increases in atmospheric CO2 will have multiple serious impacts.

However, they say, the most draconian projections of temperature increases from a doubling of CO2 are unlikely.

“Many previous climate sensitivity studies have looked at the past only from 1850 through today, and not fully integrated paleoclimate data, especially on a global scale,” said Andreas Schmittner, an Oregon State University researcher and lead author on the Science article. “When you reconstruct sea and land surface temperatures from the peak of the last Ice Age 21,000 years ago – which is referred to as the Last Glacial Maximum – and compare it with climate model simulations of that period, you get a much different picture.

“If these paleoclimatic constraints apply to the future, as predicted by our model, the results imply less probability of extreme climatic change than previously thought,” Schmittner added.

Scientists have struggled for years to quantify “climate sensitivity,” which is how much the Earth will warm in response to projected increases in atmospheric carbon dioxide. The 2007 report of the Intergovernmental Panel on Climate Change (IPCC) estimated that the air near the Earth's surface would warm on average by 2 to 4.5 degrees Celsius with a doubling of atmospheric CO2 from pre-industrial levels. The mean, or “expected value,” increase in the IPCC estimates was 3.0 degrees. Most climate model studies use the doubling of CO2 as a basic index.

Some previous studies have claimed the warming could be much more severe, as much as 10 degrees or higher with a doubling of CO2, although these projections come with an acknowledged low probability. Studies based on data going back only to 1850 are affected by large uncertainties in the effects of dust and other small particles in the air that reflect sunlight and can influence clouds (known as “aerosol forcing”) and in the absorption of heat by the oceans, the researchers say.

To reduce this uncertainty, Schmittner and his colleagues compared climate model simulations with a larger body of paleoclimate data and found constraints that rule out very high levels of climate sensitivity.

The researchers compiled land and ocean surface temperature reconstructions from the Last Glacial Maximum and created a global map of those temperatures. During this period, atmospheric CO2 was about a third lower than before the Industrial Revolution, and levels of methane and nitrous oxide were much lower. Large parts of the northern latitudes were covered in ice and snow, sea levels were lower, the climate was drier (with less precipitation), and there was more dust in the air.

All these factors, which contributed to cooling the Earth's surface, were included in the researchers' climate model simulations.

The new data changed the assessment of climate models in many ways, said Schmittner, an associate professor in OSU's College of Earth, Ocean, and Atmospheric Sciences. The researchers' reconstruction of temperatures has greater spatial coverage and showed less cooling during the Ice Age than most previous studies.

High-sensitivity climate models (those with a sensitivity of more than 6 degrees) suggest that the low levels of atmospheric CO2 during the Last Glacial Maximum would have triggered a “runaway effect” that left the Earth completely ice-covered.

“Clearly, that didn't happen,” Schmittner said. “Though the Earth then was covered by much more ice and snow than it is today, the ice sheets didn't extend beyond latitudes of about 40 degrees, and the tropics and subtropics were largely ice-free – except at high altitudes. These high-sensitivity models overestimate cooling.”

On the other hand, models with low climate sensitivity (less than 1.3 degrees) underestimate the cooling almost everywhere at the Last Glacial Maximum, the researchers say. The closest match between models and data, which carries a much smaller uncertainty than most other studies, suggests a climate sensitivity of about 2.4 degrees.

However, uncertainty levels may be underestimated because the model simulations did not take into account uncertainties arising from how cloud changes reflect sunlight, Schmittner said.

Reconstructing sea and land surface temperatures from 21,000 years ago is a complex task involving the examination of ice cores, boreholes, fossils of marine and terrestrial organisms, seafloor sediments and other sources. Sediment cores, for example, contain different biological assemblages found in different temperature regimes and can be used to infer past temperatures based on analogs in modern ocean conditions.

“When we first looked at the paleoclimatic data, I was struck by the small cooling of the ocean,” Schmittner said. “On average, the ocean was only about two degrees (Celsius) cooler than it is today, yet the planet was completely different – huge ice sheets over North America and northern Europe, more sea ice and snow, different vegetation, lower sea levels and more dust in the air.

“It shows that even very small changes in the ocean's surface temperature can have an enormous impact elsewhere, particularly over land areas at mid- to high-latitudes,” he added.

Schmittner said continued unabated fossil fuel use could lead to a warming of the sea surface similar to the one the reconstruction shows between the Last Glacial Maximum and today.

“Hence, drastic changes over land can be expected,” he said. “However, our study implies that we still have time to prevent that from happening, if we make a concerted effort to change course soon.”

Other authors on the study include Peter Clark and Alan Mix of OSU; Nathan Urban, Princeton University; Jeremy Shakun, Harvard University; Natalie Mahowald, Cornell University; Patrick Bartlein, University of Oregon; and Antoni Rosell-Mele, University of Barcelona.

More Information: http://www.oregonstate.edu

Earth Sciences (also referred to as Geosciences), which deals with basic issues surrounding our planet, plays a vital role in the area of energy and raw materials supply.

Earth Sciences comprises subjects such as geology, geography, geological informatics, paleontology, mineralogy, petrography, crystallography, geophysics, geodesy, glaciology, cartography, photogrammetry, meteorology and seismology, early-warning systems, earthquake research and polar research.

Newest articles

Bringing bio-inspired robots to life

Nebraska researcher Eric Markvicka gets NSF CAREER Award to pursue manufacture of novel materials for soft robotics and stretchable electronics. Engineers are increasingly eager to develop robots that mimic the…

Bella moths use poison to attract mates

Scientists are closer to finding out how. Pyrrolizidine alkaloids are as bitter and toxic as they are hard to pronounce. They’re produced by several different types of plants and are…

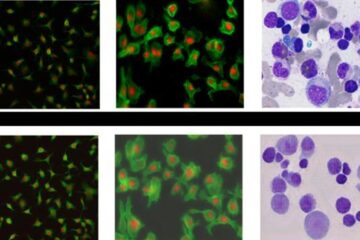

AI tool creates ‘synthetic’ images of cells

…for enhanced microscopy analysis. Observing individual cells through microscopes can reveal a range of important cell biological phenomena that frequently play a role in human diseases, but the process of…