Digital photos can animate a face so it ages and moves before your eyes

Researchers at the University of Washington have created a way to take hundreds or thousands of digital portraits and in seconds create an animation of the person's face.

The tool can make a face appear to age over time, or choose images from the same period to make the person's expression gradually change from a smile to a frown.

The researchers were inspired, in part, by people who snap a photo of themselves each day and then align them to create a movie where they appear to age onscreen. They sought an automated way to get the same effect.

“I have 10,000 photos of my 5-year-old son, taken over every possible expression,” said co-author Steve Seitz, a UW professor of computer science and engineering and engineer in Google's Seattle office. “I would like to visualize how he changes over time, be able to see all the expressions he makes, be able to see him in 3-D or animate him from the photos.”

Lead author Ira Kemelmacher-Shlizerman, a UW postdoctoral researcher in computer science and engineering, will present the research next week in Vancouver, B.C., at the meeting of SIGGRAPH, the Special Interest Group on Computer Graphics and Interactive Techniques.

“The vast majority of photos include faces – family, friends, kids, people who are close to us,” Kemelmacher-Shlizerman said.

The new project is in the same spirit as earlier UW research that automatically stitched together tourist photos of buildings to recreate an entire scene in 3-D. That work led to Microsoft's Photosynth. Faces present additional challenges, Kemelmacher-Shlizerman said, because they move, change and age over time.

Luckily, face detection technology is improving. Picasa and iPhoto added face-recognition tools a few years ago; Windows Live Photo Gallery and, most recently, Facebook, can now automatically tag photos with people's names.

“This work provides a motivation for tagging,” Seitz said. “The bigger goal is to figure out how to browse and organize your photo collection. I think this is just one initial step toward that bigger goal.”

The software starts with photos from the web or personal collections that are tagged with the same person. It locates the face and major features, then aligns the faces and chooses photos with similar expressions so the transitions are smooth. The tool uses a standard cross-dissolve, or fade, between images, which the researchers discovered can produce a surprisingly smooth transition that gives the appearance of motion.
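The cross-dissolve step can be illustrated with a short Python sketch. This is not the researchers' code: it assumes the two faces are already aligned and stored as equal-sized grids of grayscale values from 0 to 255, and the names `cross_dissolve`, `face_a` and `face_b` are hypothetical, chosen only for this illustration.

```python
def cross_dissolve(frame_a, frame_b, steps):
    """Return `steps` in-between frames fading from frame_a to frame_b.

    Assumption for this sketch: frames are equal-sized 2-D lists of
    grayscale values (0-255) showing faces that are already aligned.
    """
    if steps < 1:
        raise ValueError("steps must be at least 1")
    frames = []
    for i in range(1, steps + 1):
        # Blend weight, strictly between 0 (all frame_a) and 1 (all frame_b).
        t = i / (steps + 1)
        blended = [
            [round((1 - t) * a + t * b) for a, b in zip(row_a, row_b)]
            for row_a, row_b in zip(frame_a, frame_b)
        ]
        frames.append(blended)
    return frames


# Two tiny 2x2 "photos" standing in for aligned face images.
face_a = [[0, 100], [200, 40]]
face_b = [[100, 100], [0, 240]]

# With a single step, the in-between frame is an even mix of both photos.
middle = cross_dissolve(face_a, face_b, steps=1)[0]
print(middle)  # [[50, 100], [100, 140]]
```

Each in-between frame is a weighted average of the two photos, which is why the alignment and expression-matching steps described above matter: a fade between poorly aligned faces would show a ghosted double image rather than apparent motion.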

An example video uses photos of a Google employee's daughter taken from birth to age 20. The owner scanned the older photos to create digital versions, tagged them with the subject's name and manually added the dates. The result is a movie in which the subject ages two decades in less than a minute.

For modern babies, who are digitally chronicled from before birth, such films will be much easier to create.

One version of the tool is already available to the public. Last year, during a six-month internship at Google's Seattle office, co-author Rahul Garg, a UW doctoral student in computer science and engineering, worked with Kemelmacher-Shlizerman and Seitz to add a feature called Face Movie to the company's photo tool, Picasa.

The Face Movie version includes some simplifications to make it run more quickly. It also plays every photo tagged with the person's name, but not necessarily in chronological order.

The upcoming talk will be the first academic presentation of the research, which has potential applications in the growing overlap between real and digital experiences.

“There's been a lot of interest in the computer vision community in modeling faces, but almost all of the projects focus on specially acquired photos, taken under carefully controlled conditions,” Seitz said. “This is one of the first papers to focus on unstructured photo collections, taken under different conditions, of the type that you would find in iPhoto or Facebook.”

Related research by Kemelmacher-Shlizerman and Seitz, to be presented this fall at the International Conference on Computer Vision, goes one step further, harnessing personal photos to build a 3-D model of a face. Such models could be used to create more realistic avatars, simplify transmission of people's faces during video conferencing, or develop better techniques for recognizing faces that appear in digital photos.

Eli Shechtman at Adobe Systems is a co-author on the paper presented this month. The research was funded by Google Inc., Microsoft Corp., Adobe Systems Inc. and the National Science Foundation.

For more information, contact Kemelmacher-Shlizerman at kemelmi@cs.washington.edu or 206-543-6876 and Seitz at seitz@cs.washington.edu or 206-616-9431.

More Information:

http://www.uw.edu

Engineering and research-driven innovations in the field of communications are addressed here, in addition to business developments in the field of media-wide communications.

innovations-report offers informative reports and articles related to interactive media, media management, digital television, E-business, online advertising and information and communications technologies.

Newest articles

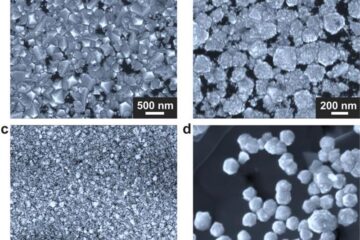

Making diamonds at ambient pressure

Scientists develop novel liquid metal alloy system to synthesize diamond under moderate conditions. Did you know that 99% of synthetic diamonds are currently produced using high-pressure and high-temperature (HPHT) methods?[2]…

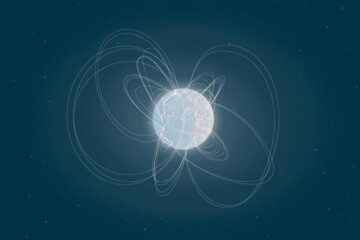

Eruption of mega-magnetic star lights up nearby galaxy

Thanks to ESA satellites, an international team including UNIGE researchers has detected a giant eruption coming from a magnetar, an extremely magnetic neutron star. While ESA’s satellite INTEGRAL was observing…

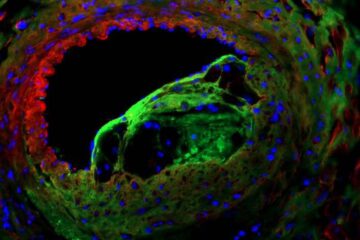

Solving the riddle of the sphingolipids in coronary artery disease

Weill Cornell Medicine investigators have uncovered a way to unleash in blood vessels the protective effects of a type of fat-related molecule known as a sphingolipid, suggesting a promising new…