The Phosphorus Index: Changes afoot

A special section being published next month in the Journal of Environmental Quality examines the Phosphorus (P) Index and how it can be improved. The collection of papers grew out of a symposium at the American Society of Agronomy, Crop Science Society of America, and Soil Science Society of America 2011 Annual Meetings.

The section acknowledges the problems that have been encountered with P Index development and implementation, such as inconsistencies between state indices, and also suggests ways in which the indices can be tested against data or models to improve risk assessment and shape future indices.

The P Index was proposed in a 1992 symposium after people became aware of the environmental impacts of P loss from fields. Many farmers were applying manure or other biosolids to their fields at rates that over-applied P. Researchers realized that assessing the risk of P loss from those products was important to protect water quality.

The P Index tool was needed to connect various conditions because P loss is influenced by both site characteristics (e.g., soil test levels, connectivity to water) and the sources of P applied (e.g., inorganic fertilizer, organic sources). It was therefore a great improvement over the use of agronomic soil testing for P risk assessment.

“The objective of the original P Index was to identify fields that had high risk of P loss and then guide producers' decisions on implementing best management practices,” says Nathan Nelson, ASA and SSSA member and co-author of the special section's introductory paper. “The P Index has developed into a widely used tool to identify appropriate management practices for P application and fields suitable for such application.”

The original 1993 paper by Lemunyon and Gilbert laid out three short-term objectives for the P Index: 1) to develop a procedure to assess the risk for P leaving a site and traveling toward a water body; 2) to develop a method of identifying critical parameters that influence P loss; and 3) to select management practices that would decrease a site's vulnerability to P loss.

These objectives were to be met using fairly simple calculations that took into account both source factors and transport factors. Source factors included levels of P in the soil, rates of P fertilization, and methods or timing of P addition. Features such as soil erosion, runoff, and distance to streams composed the transport factors.

“P loss is high when you have both a lot of P present and an easy transport pathway,” explains Nelson. “The index has been designed to evaluate the interaction between these different factors.”
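The interaction Nelson describes can be sketched in a few lines of Python. This is only an illustration: the 0 to 8 rating scale, the equal weighting of factors, and the multiplicative form are assumptions made here, not the formula of the original P Index or of any state's version. The names `Field`, `source_score`, `transport_score`, and `p_index` are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Field:
    # Hypothetical ratings from 0 (negligible) to 8 (very high).
    # Source factors
    soil_test_p: int
    p_application_rate: int
    application_method: int
    # Transport factors
    erosion: int
    runoff: int
    proximity_to_stream: int


def source_score(field: Field) -> int:
    """Sum of the source-factor ratings (equal weights assumed)."""
    return (field.soil_test_p
            + field.p_application_rate
            + field.application_method)


def transport_score(field: Field) -> int:
    """Sum of the transport-factor ratings (equal weights assumed)."""
    return field.erosion + field.runoff + field.proximity_to_stream


def p_index(field: Field) -> int:
    """Multiply source by transport so that risk is high only when both are high."""
    return source_score(field) * transport_score(field)


# Plenty of P on the field, but no pathway to water.
rich_but_isolated = Field(8, 8, 8, 0, 0, 0)
# Moderate P and a moderate transport pathway.
moderate = Field(4, 4, 4, 4, 4, 4)
# Plenty of P and an easy transport pathway.
rich_and_connected = Field(8, 8, 8, 8, 8, 8)

for name, field in [("rich_but_isolated", rich_but_isolated),
                    ("moderate", moderate),
                    ("rich_and_connected", rich_and_connected)]:
    print(name, p_index(field))
```

Under these assumed ratings, the field with abundant P but no transport pathway scores 0, the moderate field scores 144, and the field with both abundant P and an easy pathway scores 576.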

Because the P Index can be used to guide conservation practices, the USDA Natural Resources Conservation Service (NRCS) adopted it as part of its management planning process. The NRCS then left it up to each state to develop a P Index best suited to its own environments and concerns.

“The P Index was meant to be something that could be easily computed with readily available data, so an NRCS agent would be able to obtain the necessary inputs,” says Nelson. “But there are many different factors that influence P loss as you move from one physiographic region to the next. The differences in transport processes, soils, and landscapes in each state have led to 48 different versions of the P Index, and some of them are very different.”

The inconsistencies of indices across states, along with a perceived lack of improvement in water quality in some regions, are now bringing the accuracy of the P Index into question. With different calculations in place, a set of factors may be categorized as low risk in one state and medium, or even high, risk in another. These discrepancies become especially obvious along state borders.
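A small Python sketch shows one simple way such a border discrepancy could arise. It assumes two made-up states that compute the same score but use different category cutoffs. In practice the states' calculations themselves also differ. The cutoff values, the score, and the names `STATE_A`, `STATE_B`, and `classify` are all hypothetical.

```python
def classify(score, cutoffs):
    """Return the label of the first category whose upper bound exceeds the score."""
    for upper_bound, label in cutoffs:
        if score < upper_bound:
            return label
    return "very high"


# Hypothetical (upper bound, label) cutoffs for two neighboring states.
STATE_A = [(100, "low"), (250, "medium"), (400, "high")]
STATE_B = [(50, "low"), (120, "medium"), (300, "high")]

# The same hypothetical field score, evaluated on either side of the border.
score = 90
print("State A:", classify(score, STATE_A))
print("State B:", classify(score, STATE_B))
```

With these assumed cutoffs, a score of 90 is rated low in State A and medium in State B.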

Researchers understand the need to improve P indices and have made it a priority to base any changes on sound scientific data. Efforts to preserve, evaluate, and improve the P Index led the NRCS to release a Request for Proposals within the Conservation Innovation Grant Program. Three regional efforts were funded to evaluate and improve the indices in the Heartland, Southern States, and Chesapeake Bay regions of the U.S. Additionally, a national coordination project and two state-level efforts (Ohio and Wisconsin) were recently funded through the same program.

While the final suggestions for the next generation of the P Index are likely a few years off, the research is underway. Because of regional variation and the problems previously encountered at state boundaries, suggestions for improved indices will likely be based on regional distinctions, Nelson says. The goal is for the evaluations to lead to optimized P indices and better management tools that accurately incorporate site and source characteristics to predict the risk of P loss from fields.

“The scientific community backs the P Index as the best method to assess P loss risk,” says Nelson. “The challenge now is to develop consistency in P indices across state boundaries and quantify the accuracy of P Index risk assessments.”

The full article is available at no charge for 30 days following the date of this summary. View the abstract at https://www.agronomy.org/publications/jeq/abstracts/41/6/1703.

The Journal of Environmental Quality is a peer-reviewed, international journal of environmental quality in natural and agricultural ecosystems published six times a year by the American Society of Agronomy (ASA), Crop Science Society of America (CSSA), and the Soil Science Society of America (SSSA). The Journal of Environmental Quality covers various aspects of anthropogenic impacts on the environment, including terrestrial, atmospheric, and aquatic systems.

Media Contact

More Information:

http://www.agronomy.org/publications/jeq/abstracts/41/6/1703All latest news from the category: Agricultural and Forestry Science

Newest articles

Silicon Carbide Innovation Alliance to drive industrial-scale semiconductor work

Known for its ability to withstand extreme environments and high voltages, silicon carbide (SiC) is a semiconducting material made up of silicon and carbon atoms arranged into crystals that is…

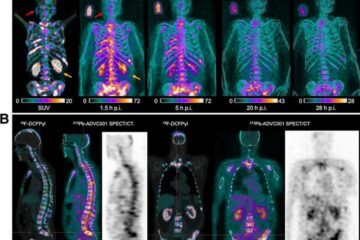

New SPECT/CT technique shows impressive biomarker identification

…offers increased access for prostate cancer patients. A novel SPECT/CT acquisition method can accurately detect radiopharmaceutical biodistribution in a convenient manner for prostate cancer patients, opening the door for more…

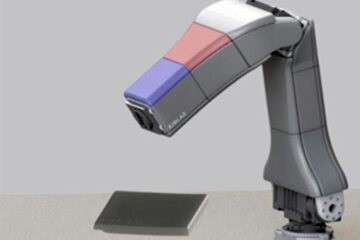

How 3D printers can give robots a soft touch

Soft skin coverings and touch sensors have emerged as a promising feature for robots that are both safer and more intuitive for human interaction, but they are expensive and difficult…